Everybody likes to laugh about the Waterfall methodology.

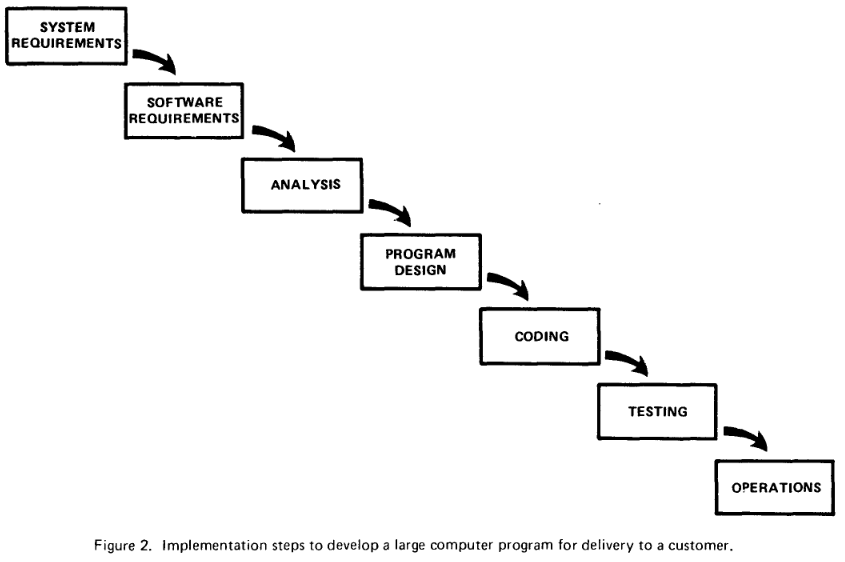

Where does it come from? In 1970, Winston Royce published "Managing the Development of Large Software Systems". It contains the following figure.

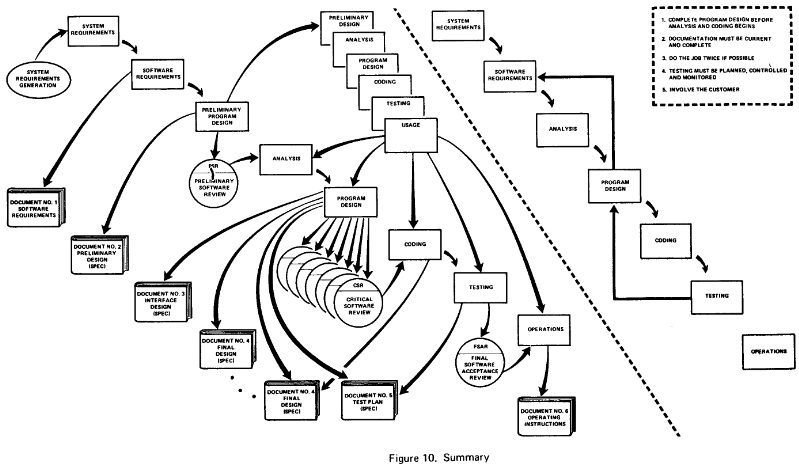

However, this is only figure 2 of 10. The paper continues by deriving a third figure:

Figure 3 portrays the iterative relationship between successive development phases for this scheme. The ordering of steps is based on the following concept: that as each step progresses and the design is further detailed, there is an iteration with the preceding and succeeding steps but rarely with the more remote steps in the sequence.

Royce identifies a need for iteration which is usually not associated with Waterfall. He continues:

I believe in this concept, but the implementation described above is risky and invites failure. The problem is illustrated in Figure 4. The testing phase which occurs at the end of the development cycle is the first event for which timing, storage, input/output transfers, etc., are experienced as distinguished from analyzed. These phenomena are not precisely analyzable. They are not the solutions to the standard partial differential equations of mathematical physics for instance. Yet if these phenomena fail to satisfy the various external constraints, then invariably a major redesign is required. [...] In effect the development process has returned to the origin and one can expect up to a 100-percent overrun in schedule and/or costs.

This concludes his observations on what does not work. Afterwards come the actual contributions of the paper, which are ideas on how to fix this process. It is a redesign and not a radical reboot like Agile. Here are some quotes which sound more modern than 1970.

Test every logic path in the computer program at least once with some kind of numerical check. If I were a customer, I would not accept delivery until this procedure was completed and certified. This step will uncover the majority of coding errors.

In other words: 100% branch coverage.
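
To make the idea concrete, here is a minimal Python sketch. It is not taken from Royce's paper: the function `shipping_cost` and its pricing rule are hypothetical, invented only for illustration. The point is that every branch is exercised at least once and each result is compared against a hand-computed number.

```python
def shipping_cost(weight_kg: float, express: bool) -> float:
    """Hypothetical pricing rule with an error branch and two decisions."""
    if weight_kg <= 0:
        raise ValueError("weight must be positive")
    # Flat rate up to 2 kg, then a surcharge per additional kg.
    cost = 5.0 if weight_kg <= 2 else 5.0 + 1.5 * (weight_kg - 2)
    if express:
        cost *= 2
    return cost


def run_checks() -> int:
    """Exercise every branch at least once with a numerical check."""
    checks = [
        (shipping_cost(1, False), 5.0),   # light, standard
        (shipping_cost(4, False), 8.0),   # heavy, standard
        (shipping_cost(1, True), 10.0),   # light, express
        (shipping_cost(4, True), 16.0),   # heavy, express
    ]
    for actual, expected in checks:
        assert actual == expected

    # The error branch needs its own check.
    try:
        shipping_cost(0, False)
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for non-positive weight")

    return len(checks) + 1  # number of checks performed


if __name__ == "__main__":
    print(f"{run_checks()} checks passed")
```

For a function this small, covering all four combinations of the two decisions is cheap. In real programs the number of paths grows quickly, which is why branch coverage is the more common practical target.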

For some reason what a software design is going to do is subject to wide interpretation even after previous agreement. It is important to involve the customer in a formal way so that he has committed himself at earlier points before final delivery. To give the contractor free rein between requirement definition and operation is inviting trouble.

Scrum also defines a formal way to involve the customer: primarily through prioritizing stories at Sprint planning. Also, the "free rein between requirement definition and operation" is a problem well known today from outsourcing the implementation.

On Hacker News, nickpsecurity summarizes the paper:

He preempts Spiral/Agile, re-did diagrams for complexity of real-world development, discoveries of maintenance phase as hardest, legal battles over blame, buy-in by stakeholders, mock-ups to catch requirements/design issues early, noting that's necessary in first place, and so on. Reading it makes me wonder what the (censored) people were reading when they described waterfall as a static, unrealistic process?

In reality, it's so forward-thinking and accurate I might have to rewrite some of my own essays that were tainted by slanderous depictions of his Waterfall model.

Also, the word "waterfall" does not appear in the paper. It seems to appear first in 1976 in "Software requirements: Are they really a problem?" by Bell and Thayer.

It all went wrong at the DOD

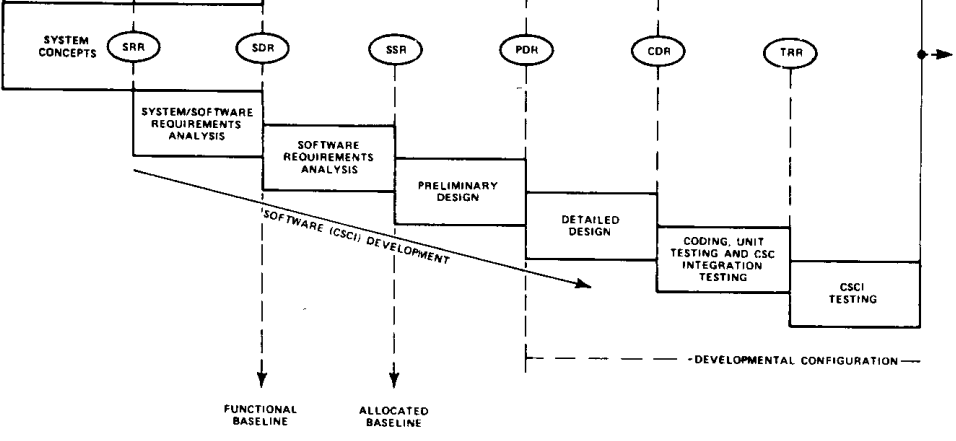

In 1985 the US Department of Defense wanted a documented process for software development: DOD-STD-2167. Since governments and companies reused these requirements, the standard spread across the world from there. It provided managers with a simple single question, "Do you follow DOD-STD-2167?", to tick off the whole field of development methodology.

The depiction of Waterfall in there (part of figure 1):

This is where the bad reputation of Waterfall comes from, although the word "waterfall" does not appear in there either.

On Hacker News, gruseoms commented:

Although there are a great many blog posts on this subject, they mostly (including this one) are uncredited paraphrases of Craig Larman's excellent research on the history of iterative development. It was Larman who figured out that Royce's original paper described waterfall as what not to do, Larman who figured out the history of how the DoD adopted it anyway (basically, they didn't read the second half of the paper), and Larman who tracked down the guy who had been responsible for that decision. IIRC, they met for lunch in Boston and his first words to Larman were "I'm so sorry!"

While the quality of Larman's research is debatable, we definitely know that the DOD has learned since then. In 1994, MIL-STD-498 superseded DOD-STD-2167. It proposes three different strategies:

- Grand Design, which corresponds to the DOD Waterfall.

- Incremental, which starts with a full requirements analysis but uses multiple development cycles.

- Evolutionary, which starts development without having all requirements.

The example decision process in the document selects "Evolutionary" for reasons like "Requirements are not well understood".

Modern software development isn't

It seems to be a myth that Waterfall dominated software development decades ago. I recommend reading Craig Larman's "Iterative and Incremental Development: A Brief History". He traces iterative development back to the 1930s.

Well, certainly in some industries Waterfall works well, doesn't it? For example, when NASA develops code for space flights, they do take the time for rigorous requirements engineering, don't they? Larman describes Project Mercury, the first human space flight program of the US around 1960.

Project Mercury ran with very short (half-day) iterations that were time boxed. The development team conducted a technical review of all changes, and, interestingly, applied the Extreme Programming practice of test-first development, planning and writing tests before each micro-increment. They also practiced top-down development with stubs.

I conclude that ideas like iterations were actually the normal and intuitive way to develop software from the start. The single-cycle Waterfall idea was a historical accident, which we as an industry have not overcome yet. There is some other force which suggests to us that we should have our requirements pinned down beforehand.

The underlying problem was nicely described by Frederick Brooks in 1986 (one year after DOD-STD-2167) in "No Silver Bullet":

Much of present-day software acquisition procedure rests upon the assumption that one can specify a satisfactory system in advance, get bids for its construction, have it built, and install it. I think this assumption is fundamentally wrong, and that many software acquisition problems spring from that fallacy.

I believe we build software at the complexity frontier our tooling and education allow. This implies that we are unable to specify programs in enough detail in advance. And if you specify them only roughly, or only afterwards, the specification will not match the code.

Now give a bit more respect to the greybeards. They knew this decades ago, and most of them probably identified the DOD's Waterfall as the accident it was right from the start.
